While we don’t often think about them, standards of measure are one of the cornerstones of human society. If things aren’t sold by count, as in buying a single orange or twelve eggs, they are sold relative to a certain measure. You might buy 20 ounces of crackers, or one-and-a-half pounds of chicken, or tile and carpet by the square foot.

But, when it comes to paying humans for the work that they do, one of the most common standard measures is the hour. In this system, people get paid not for how much they do, but for how long they do it for. How time is measured has direct economic impact.

So, why is it so dang arbitrary?

While I’ll always defend the foot relative to the meter because it divides nicely by 3, the meter does win out in objectivity. First, it works nicely with decimals, and second, in its original conception, it was grounded in physical distance: it was equal to one ten-millionth of the distance between the equator and the North Pole. And while the technical definition has shifted over time, first to the length of a single metal bar, then to a number of wavelengths of light given off by krypton-86, and finally to the distance traveled by light in a vacuum in an inconceivably tiny fraction of a second, it’s nice to know that it has a standard beginning, even if the distance between the North Pole and the equator isn’t that significant on the cosmic scale.

But time, on the other hand, has been less rationally scrutinized.

What even is a second? Why are there sixty of them in a minute, and sixty of those in an hour?

While the second now has modern formulations based on objective standards, just like the various reformulations of the meter, its origins are much less scientifically based. The Egyptians got the ball rolling by dividing the day into two sets of twelve hours each. These hours varied in length with the season, since daytime and nighttime lengthen and shorten over the year. The Babylonians then adopted a system with 12 hours in a day, each lasting 120 of our minutes. They kept subdividing by 60, and each of those measures by 60 again, and so on until they had a unit of time that was approximately 2 microseconds long.

Early mechanical clock makers aimed for the second as a standard unit, and since they achieved that goal there’s been little change in the length of a second. As it turns out, one of the most common measures of time is just a division of a division of a division of a more standard unit, the day, which is (in theory) based on the rotation of the Earth.

But, then there’s the week, with 7 days in it, which doesn’t quite add up to the 365 that are in a year, even if we leave off that pesky quarter-day that gives us a leap year once every four years. And then there’s the month, which couldn’t be bothered to have a standard number of days in it, ranging from 28 to 31, but only featuring 29 one year in four.
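To see the mismatch in numbers, here is a small sketch using Python's standard `calendar` module. The helper name `month_lengths` is made up for illustration; it simply lists how many days each month has in a given year.

```python
import calendar

DAYS_IN_COMMON_YEAR = 365
DAYS_IN_WEEK = 7

# How many whole weeks fit in a common year, and how many days are left over?
whole_weeks, leftover_days = divmod(DAYS_IN_COMMON_YEAR, DAYS_IN_WEEK)


def month_lengths(year):
    """Return the number of days in each of the 12 months of `year`."""
    return [calendar.monthrange(year, month)[1] for month in range(1, 13)]


if __name__ == "__main__":
    print(f"{whole_weeks} weeks with {leftover_days} day left over")
    print("Common year:", month_lengths(2023))
    print("Leap year:  ", month_lengths(2024))
```

The one leftover day is why a given date lands on a different weekday each year.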

Just as the French gave us metric measurements, they also tried to give us metric time.

Meet the French Republican Calendar. Like the rest of the metric system, it was decimal-based. Each day was divided into ten hours, which were subdivided into 100 minutes, which were subdivided into 100 seconds. As an end result, hours and minutes were much longer than in the system we use, but seconds were a bit shorter.
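For the curious, the arithmetic behind those unit lengths can be sketched in a few lines of Python. This is only an illustration: the function names are invented here, and the conversion assumes a day of exactly 86,400 standard seconds.

```python
STANDARD_SECONDS_PER_DAY = 24 * 60 * 60    # 86,400
DECIMAL_SECONDS_PER_DAY = 10 * 100 * 100   # 100,000


def decimal_unit_lengths():
    """Length of each decimal unit, expressed in standard seconds."""
    return {
        "decimal_hour": STANDARD_SECONDS_PER_DAY / 10,
        "decimal_minute": STANDARD_SECONDS_PER_DAY / (10 * 100),
        "decimal_second": STANDARD_SECONDS_PER_DAY / (10 * 100 * 100),
    }


def to_decimal_time(hour, minute, second=0):
    """Convert a standard clock reading to (decimal hour, minute, second)."""
    elapsed = hour * 3600 + minute * 60 + second
    # Scale the elapsed fraction of the day onto 100,000 decimal seconds.
    decimal_seconds = elapsed * DECIMAL_SECONDS_PER_DAY // STANDARD_SECONDS_PER_DAY
    decimal_hour, remainder = divmod(decimal_seconds, 100 * 100)
    decimal_minute, decimal_second = divmod(remainder, 100)
    return decimal_hour, decimal_minute, decimal_second


if __name__ == "__main__":
    for name, length in decimal_unit_lengths().items():
        print(f"{name}: {length} standard seconds")
    print("Noon in decimal time:", to_decimal_time(12, 0))
    print("6 p.m. in decimal time:", to_decimal_time(18, 0))
```

A decimal hour works out to 2.4 standard hours and a decimal minute to a bit under a minute and a half, while a decimal second is a little shorter than the one we use.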

But, they also reformed the calendar, thinking that the Gregorian calendar gave the church too much power over the populace. Each week now had ten days, and each month had 3 weeks, adding up to a standard 30 days each. The remaining days were made up as holidays at the end of each year: five in standard years, and six in leap years.

At the time, under the Gregorian calendar, laborers got one day off in every seven. Under the French Republican Calendar, they got one full day off and one half-day off in every ten. Over the course of the year, this system would add about 2.5 extra days off relative to the Gregorian calendar, but the longer stretch between full days off proved quite unpopular and was part of the reason that the calendar was ultimately scrapped.1
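The days-off comparison is easy to check with a back-of-the-envelope sketch. It assumes a 365-day year and simply pro-rates the rest days over it, ignoring the year-end holidays; the function name is hypothetical.

```python
DAYS_PER_YEAR = 365  # assumption: a common year, year-end holidays ignored


def days_off_per_year(days_off_per_cycle, cycle_length, days_per_year=DAYS_PER_YEAR):
    """Pro-rate the rest days in one cycle (a 'week') over a whole year."""
    return days_per_year / cycle_length * days_off_per_cycle


seven_day_week = days_off_per_year(1, 7)     # one day off in every seven
ten_day_week = days_off_per_year(1.5, 10)    # a full day plus a half-day in every ten
extra_days_off = ten_day_week - seven_day_week

if __name__ == "__main__":
    print(f"Seven-day week: {seven_day_week:.2f} days off per year")
    print(f"Ten-day week:   {ten_day_week:.2f} days off per year")
    print(f"Difference:     {extra_days_off:.2f} days")
```

Under these assumptions the difference comes out a little above the rough figure of 2.5 days, while the stretch of work between full days off grows from six days to nine.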

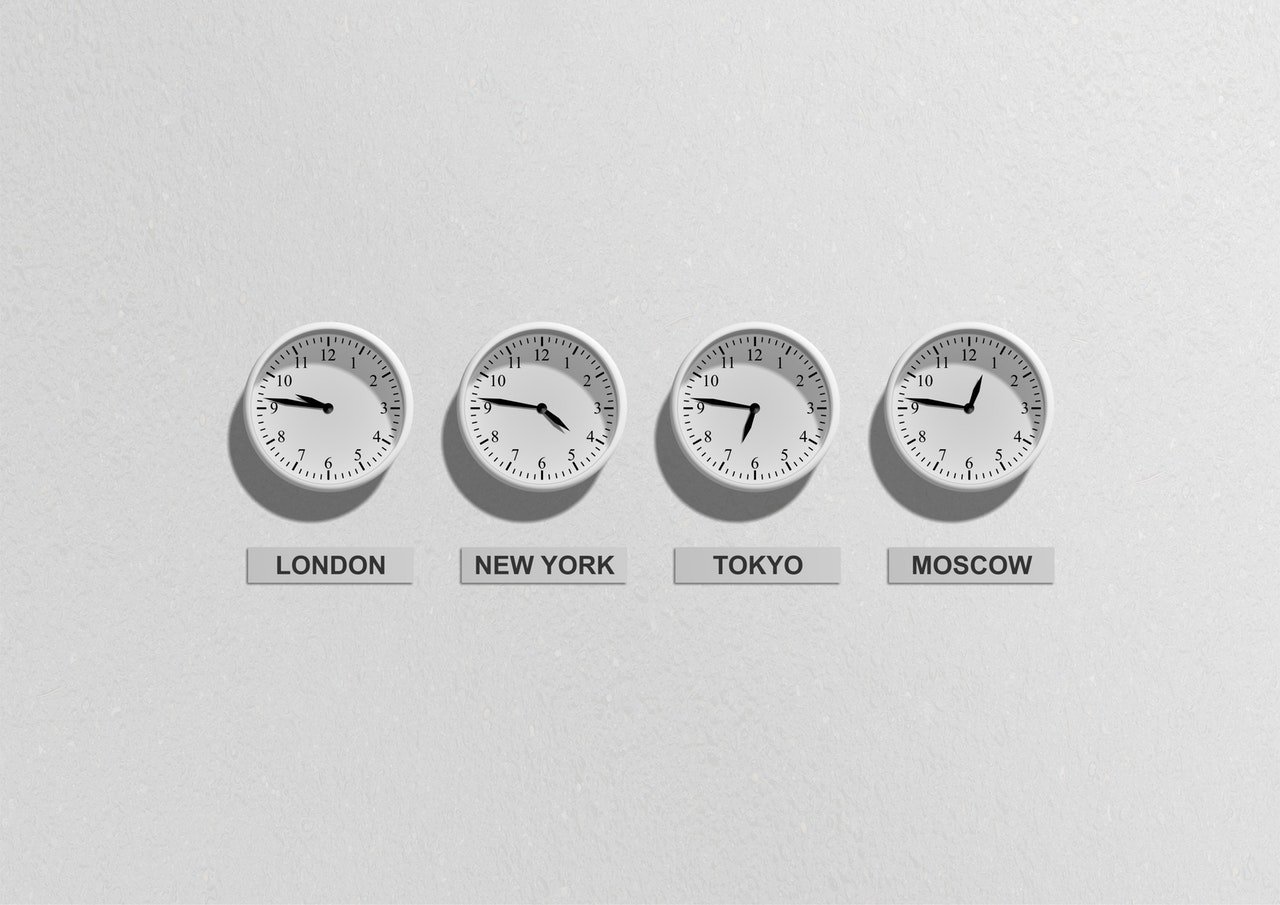

And then there’s another attempt at universal time coordination, known as Coordinated Universal Time (confusingly abbreviated as UTC). This is the reference against which time zones around the world are set. In theory, a place’s location should determine what time it is in that place. In practice, it gets quite messy.2

First, the United States: Here, we can already see the pitfalls of letting cities choose which time zone they fall in. The border between the Mountain Time Zone and the Pacific Time Zone is especially infuriating.

It gets worse as we travel around the world.

In Russia, for instance, one can move a few miles west and suddenly find the time is two hours later, or move a few miles south, find it is one hour later, then move the same distance west and find it hasn’t changed any further:

And then there’s mainland Europe, which doesn’t really have an excuse:

In particular, I’m looking at France and Spain, which lie mostly or entirely outside the time zone they claim to practice.
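One way to put a number on "should" is to divide the globe into 24 slices of 15 degrees of longitude each and see which slice a city falls in. The sketch below does that. The city longitudes are approximate values supplied for illustration, the offsets are each country's standard (winter) time, and the function name is invented.

```python
DEGREES_PER_HOUR = 360 / 24  # the Earth turns 15 degrees of longitude per hour


def nominal_utc_offset(longitude_degrees):
    """UTC offset (in whole hours) suggested by longitude alone; east is positive."""
    return round(longitude_degrees / DEGREES_PER_HOUR)


# city: (approximate longitude in degrees east, standard-time UTC offset in use)
CITIES = {
    "Berlin": (13.40, 1),
    "Paris": (2.35, 1),
    "Madrid": (-3.70, 1),
}

if __name__ == "__main__":
    for city, (longitude, offset_in_use) in CITIES.items():
        nominal = nominal_utc_offset(longitude)
        print(f"{city}: longitude suggests UTC{nominal:+d}, clocks use UTC{offset_in_use:+d}")
```

By this rough measure Berlin's clocks match its longitude, while Paris and Madrid both run an hour ahead of where geography alone would put them.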

I was going to give them grief, but then I discovered that like almost everything, you can blame Nazis for this. When the Germans occupied France, they standardized the local time so that it matched their own, which made train travel between the two countries easier. And apparently the dictator Francisco Franco in Spain thought it would be good to match up time with the Germans after they invaded France.

After the war, neither country bothered switching back.

So, there you have it: A Short History of Time, if you will. It seems that no matter how much we try to standardize time, it somehow falls short of being as standardized as the meter. Somehow, we can’t even get our days and weeks to line up with our years. Does it matter? Probably not. While it seems we’ve failed in this regard in our obsessive quest for perfection, at this point changing to a better system might cause disruption that outweighs any potential benefits.

The underlying issue, it seems, is that we are shooting for an arbitrary target. The motion of the sun around the earth seems rather arbitrary, doesn’t it? Why should we mark one revolution?

And just to shove more of the same onto your mind: what are the chances that a day could possibly be exactly 24 hours long, even if we are consistent? And even if days are 24 hours long right now, what about entropy and the energy lost in the motion of the earth? Even if we were at 24 hours, the likelihood of us continuing to rotate at 24 hours is non-existent. We are losing a small amount of angular velocity each day, negligible now, but not forever.

Woo! Existential dread!